Four recommendations for getting the most out of LLMs: a message for top management

Fujitsu / March 22, 2024

ChatGPT, launched in November 2022, exemplifies the unprecedented speed with which generative AI has been adopted as a new framework for value creation. The potential power of generative AI comes from large language models (LLMs), which have the potential to outperform conventional AI. LLM technology is evolving rapidly, and improvements in model performance are leading to more advanced generative capabilities and new business models. As industry's understanding of LLMs grows, companies are accelerating their efforts to integrate this technology into their operations and usher in a new era of value creation. In fact, many executive surveys rank the adoption of generative AI (LLMs) among the top new technology investment priorities for organizations.

In past adoptions of conventional AI, many organizations got stuck in the proof of concept (PoC) and use case establishment phases. As a result, the management impact (contribution to management outcomes) was limited. These situations are sometimes called "pilot purgatory," where a project fails to move forward, or "use case death," where a use case never materializes. To ensure that generative AI does not end up as a passing fad, we have put together key recommendations for top management based on what we have learned from our research.

Contents

- LLM Lifecycle Involving Value Creation

- Key Management Recommendations

- Recommendation 1: Leveraging LLMs as a new infrastructure that is evolving "humanly"

- Recommendation 2: Use LLMs to maximize management performance: From Top Line to Bottom Line

- Recommendation 3: Understand the complementary relationship between generative and conventional AI to make investment decisions

- Recommendation 4: Addressing potential mixed risks associated with LLM operations

LLM Lifecycle Involving Value Creation

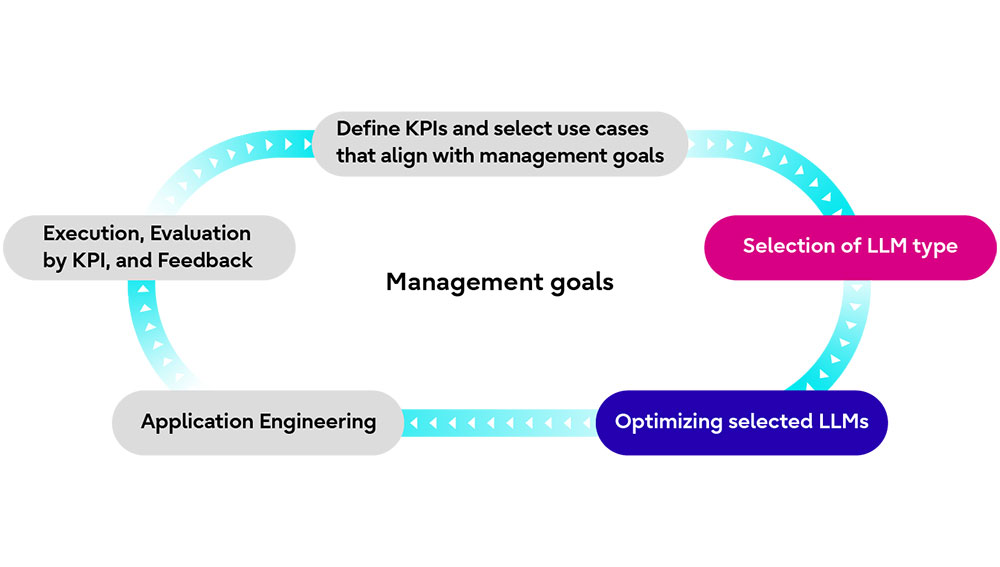

For enterprises, an LLM is not just a new "toy" but a new foundation and platform for digital transformation. As Figure 1 illustrates, creating value with an LLM involves several distinct and important steps. The LLM lifecycle includes formulating business goals and identifying use cases, selecting and optimizing the appropriate model for the company, developing and implementing the application, and evaluating and improving the company's LLM based on KPIs.

Figure 1 LLM Lifecycle

We have previously published the insight paper "Generative AI: Use Cases as the Pathway to Value Creation," which discusses setting KPIs and identifying use cases. We have also published the insight paper "Leveraging the LLM: Strategy from Model Selection to Optimization," which presents three options for model selection and two basic approaches to model optimization (in the broad sense): context optimization and LLM optimization (in the narrow sense).

Key Management Recommendations

From a technical perspective, the LLM lifecycle described above outlines the key steps in creating value with LLMs. From a management perspective, every process (lifecycle) involving LLMs should be executed in line with the company's value creation goals. This means that top management needs to understand LLMs, take a higher-level view of the entire LLM lifecycle, and focus on maximizing value. Ultimately, how much of the potential value of LLMs a company realizes depends on its management capabilities.

Recommendation 1: Leveraging LLMs as a new infrastructure that is evolving "humanly"

Unlike conventional AI, which addresses each step of the business (individual tasks) separately, an LLM acts as an intelligent engine that can handle multiple business steps at once. In this sense it goes beyond being a machine and becomes a new infrastructure that evolves in a "human" way. This marks an evolution beyond traditional physical infrastructure and beyond software as a machine.

LLMs are a driver of value creation, taking part in the value creation process through tangible use cases whose benefits businesses can feel directly. This "human" infrastructure is affecting every industry, making the technology more accessible and bringing about a productivity revolution for people. The level of automation has also evolved, from RPA with conventional AI to EPA and then to CA. In other words, whereas humans were previously directly involved in processes and decisions (in the loop), LLM-powered AI will take control, and humans will act as observers and final approvers (on the loop). In addition, LLMs have the potential to augment human innovation capabilities and help steer the future of business.

Recommendation 2: Use LLMs to maximize management performance: From Top Line to Bottom Line

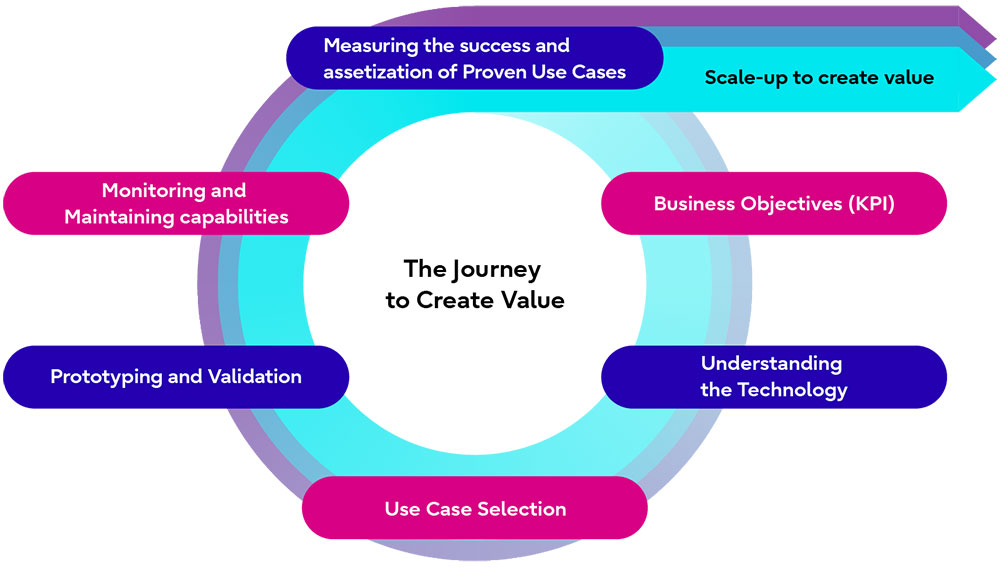

As Figure 2 shows, implementing generative AI (LLMs) in the new value creation process requires validating PoCs and use cases. However, technologies, know-how, and use cases (such as architectures) that have been proven through repeated practice should be scaled up rapidly by turning them into reusable assets (capitalization). In the scale-up phase, PoC and use case demonstrations can be skipped, allowing a move directly to implementation.

To maximize management performance, it is important to take an approach that achieves both the top line (growth targets) and the bottom line (profit targets) at the same time. Otherwise, the initiative may lose support within the organization, and the company's growth may stagnate.

Figure 2 Maximizing generative AI performance through effective stepping and scaling

Recommendation 3: Understand the complementary relationship between generative and conventional AI to make investment decisions

The greatest advantages of generative AI are its creativity and adaptability. These qualities allow it to efficiently perform tasks that conventional AI is not good at, such as generating text, summarizing information, generating software code, and automating knowledge-intensive tasks. For example, companies can quickly generate new ideas and incorporate them into their products and services, which helps them increase productivity and create new value.

Conventional AI, on the other hand, behaves relatively predictably and produces consistent results (predictability and consistency). It therefore delivers high accuracy on specific tasks and improves productivity, enabling organizations to automate routine work and free employees to focus on more advanced tasks.

When making an AI investment decision, an organization should first define its needs and goals, then evaluate the cost and ROI of the investment, and finally choose generative AI, conventional AI, or a combination of both, taking into account technical constraints, resources, risks, and regulations. The best choice of AI therefore depends on the organization's goals and needs. Conventional AI and generative AI have different strengths, and the best results are often achieved by combining the two.

Recommendation 4: Addressing potential mixed risks associated with LLM operations

As the use of LLMs grows rapidly, an organization's over-reliance on them creates a new risk: the business itself can be disrupted by a system outage or LLM failure. In fact, major generative AI companies have reportedly experienced system failures (*1). To mitigate this risk, some leading companies have taken measures such as using multiple models (*2).
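To make the multiple-model measure more concrete, the following is a minimal Python sketch of one common pattern, priority-ordered failover, assuming each model is wrapped in a function that raises an exception when it fails. The names `generate_with_fallback`, `primary_model`, and `backup_model` and the stand-in providers are hypothetical illustrations, not any vendor's API and not the approach used by the companies cited above.

```python
from typing import Callable, Sequence


class AllModelsFailedError(RuntimeError):
    """Raised when every configured model fails for a request."""


def generate_with_fallback(
    prompt: str,
    models: Sequence[tuple[str, Callable[[str], str]]],
) -> tuple[str, str]:
    """Try each model in priority order and return (model_name, response).

    `models` is a list of (name, call) pairs. Each call is a hypothetical
    wrapper around one provider's LLM and is expected to raise on failure.
    """
    errors: list[str] = []
    for name, call in models:
        try:
            return name, call(prompt)
        except Exception as exc:  # outage, timeout, rate limit, etc.
            errors.append(f"{name}: {exc}")
    # Only reached if every model raised an exception.
    raise AllModelsFailedError("; ".join(errors))


# Hypothetical stand-ins for models from two different providers.
def primary_model(prompt: str) -> str:
    # Simulates a provider outage.
    raise ConnectionError("service unavailable")


def backup_model(prompt: str) -> str:
    return f"summary of: {prompt}"


if __name__ == "__main__":
    name, answer = generate_with_fallback(
        "Q3 sales report",
        [("primary", primary_model), ("backup", backup_model)],
    )
    print(name, "->", answer)  # prints: backup -> summary of: Q3 sales report
```

Routing requests to a second model keeps the business process running during a provider outage. In practice, the backup might come from a different vendor or be an in-house model, which would also reduce dependence on any single supplier.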

In addition, using a commercial LLM as the core of external solutions can undermine your competitive advantage. This is because a commercial LLM is available to anyone in the market, including competitors, and is therefore not a moat (a durable competitive advantage or corporate strength) in the way that patents or know-how are.

Finally, when an LLM draws on external services via RAG or APIs, risks from those external sources can be brought into the LLM itself. Such internalized external risk can become a serious problem, especially as more external services are expected to be accessed via APIs in the future.

(*1) OpenAI Shut Down ChatGPT to Fix Bug Exposing User Chat Titles

(*2) Jasper (2023) “Jasper vs. ChatGPT: Which is Right for You?”

Related information

- Leveraging the LLM: Strategy from Model Selection to Optimization (2024)

- Generative AI: Use Cases as the Pathway to Value Creation (2024)

- Transformative Quantum Computing: Striving for Greater Heights in Pursuit of Steady Progress (2023, Co-author)

- Transforming Supply Chains to Be More Productive, Resilient, and Sustainable (2023)
